For the past two years, the internet has been flooded with tools promising to revolutionize scholarly work. Every platform claims to be the best AI tool for research, the ultimate AI paper generator, or the smartest AI literature review software.

But if you’ve used them, you’ve likely hit a frustrating wall: most of them don’t actually help with research.

They paraphrase text. They summarize abstracts. But they fail at the soul of academia: synthesis. The problem isn't the technology; it’s the approach.

The Mirage of "AI Paper Tools"

Many platforms marketed as AI for academic writing focus on text generation instead of comprehension. They act like glorified autocomplete systems. When you ask for help with a research paper, they produce paragraphs that sound academic but lack the connective tissue of real evidence.

This creates three critical failures:

1. The Trap of Surface-Level Summaries

Most AI summary tools operate in a vacuum. They compress a single PDF but fail to connect ideas across a library. Real research isn't about summarizing one paper at a time; it’s about understanding the "conversation" between dozens of studies.

2. The Hallucination Hazard

A major flaw in many AI paper generators is citation reliability. Some claim to provide AI-generated text with citations, but the references are often fabricated, outdated, or irrelevant. In a peer-reviewed world, an assistant that invents sources isn’t a time-saver; it’s a liability.

3. The Absence of Cross-Paper Reasoning

Scholars don't just ask "What does this paper say?" They ask:

- "Which studies disagree with this methodology?"

- "What variables explain these conflicting results?"

Most AI literature tools are deaf to these questions. They treat every paper as an island, failing to map the archipelago of human knowledge.

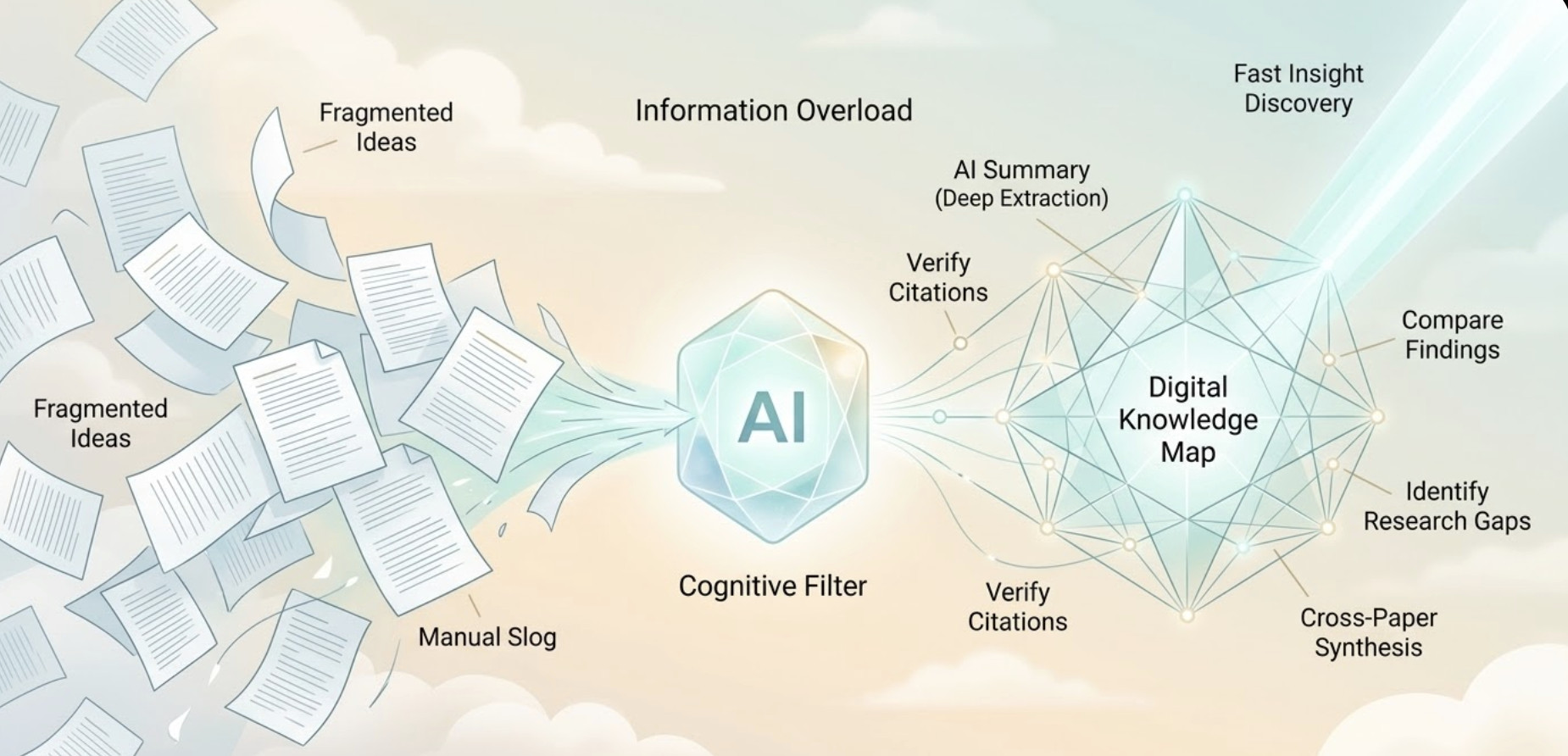

Solving the Real Bottleneck

If you ask a PhD student where their time goes, the answer is rarely "typing." It’s synthesis. The true labor lies in extracting arguments and identifying gaps across a mountain of data.

The most useful AI tool for research isn’t the one that writes your conclusion; it’s the one that helps you think. This requires a shift from "text tools" to "knowledge engines."

What "Good" Research AI Actually Looks Like

The emerging leaders in the literature review AI space focus on Knowledge Synthesis. A robust system must excel in three areas:

- Deep Extraction: An effective AI summary must do more than shorten text; it must isolate research questions, datasets, and specific findings.

- Comparative Analysis: A strong AI literature review generator should analyze ten papers at once. It should tell you which studies support your hypothesis and which use similar methods, transforming manual comparison into structured analysis.

- Verifiable Integrity: Trustworthiness is the only currency in research. Open-paper AI and research paper helper tools must pull directly from verified sources. Accuracy must always outrank speed.
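To make these three areas concrete, here is a minimal Python sketch of what a structured record and two comparisons over it might look like. Everything in it is an assumption for illustration: the names `PaperRecord`, `Finding`, `group_by_method`, `split_by_stance`, and `unverified_findings` are hypothetical, the toy papers are invented placeholders, and the extraction step itself (turning a PDF into a record) is taken as already done. It is not how any particular tool works.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Finding:
    claim: str         # one-sentence finding extracted from the paper
    stance: str        # "supports" or "contradicts" the hypothesis under review
    source_quote: str  # verbatim passage the claim was extracted from


@dataclass
class PaperRecord:
    # Deep extraction: fields go beyond a shortened abstract.
    paper_id: str
    research_question: str
    method: str
    datasets: list[str]
    findings: list[Finding] = field(default_factory=list)
    full_text: str = ""


def group_by_method(papers: list[PaperRecord]) -> dict[str, list[str]]:
    """Comparative analysis: which papers use the same method?"""
    groups: dict[str, list[str]] = defaultdict(list)
    for paper in papers:
        groups[paper.method.lower()].append(paper.paper_id)
    return dict(groups)


def split_by_stance(papers: list[PaperRecord]) -> dict[str, list[str]]:
    """Comparative analysis: which papers support or contradict the hypothesis?"""
    result: dict[str, list[str]] = {"supports": [], "contradicts": []}
    for paper in papers:
        for finding in paper.findings:
            bucket = result.get(finding.stance)
            if bucket is not None and paper.paper_id not in bucket:
                bucket.append(paper.paper_id)
    return result


def unverified_findings(papers: list[PaperRecord]) -> list[tuple[str, str]]:
    """Verifiable integrity: flag claims whose quote is not in the source text."""
    return [
        (paper.paper_id, finding.claim)
        for paper in papers
        for finding in paper.findings
        if finding.source_quote not in paper.full_text
    ]


# Toy, invented records standing in for an extracted library.
papers = [
    PaperRecord(
        "paper-a", "Does X affect Y?", "Survey", ["dataset-1"],
        [Finding("Effect observed", "supports", "we observe the effect")],
        "In our sample we observe the effect across groups.",
    ),
    PaperRecord(
        "paper-b", "Does X affect Y?", "survey", ["dataset-2"],
        [Finding("No effect", "contradicts", "no effect was found")],
        "Overall, no effect was found.",
    ),
    PaperRecord(
        "paper-c", "Does X affect Y?", "experiment", ["dataset-1"],
        [Finding("Strong effect", "supports", "a strong effect")],
        "Results were mixed.",
    ),
]

print(group_by_method(papers))
print(split_by_stance(papers))
print(unverified_findings(papers))
```

The design choice worth noting is that every finding carries the passage it came from, so a claim that cannot be traced back to its source text is flagged rather than trusted. A real system would need fuzzier matching than an exact substring check.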

The Future: AI as the Scalpel, Not the Surgeon

AI will not replace the researcher, but it will fundamentally retool the laboratory.

The future of literature review software lies in understanding academic arguments and connecting evidence across disparate fields. The best AI for academic research won't be the one that writes the most convincing paragraphs; it will be the one that helps scholars discover insights that were previously hidden in the noise.

That is a much harder problem to solve, and it's where the real breakthrough is happening.